
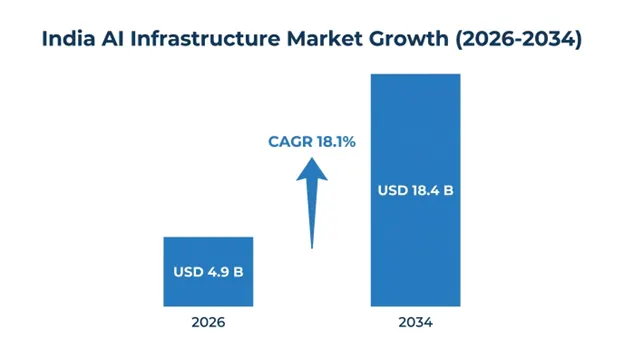

The AI infrastructure market in India was valued at USD 3.8 billion in 2025 and is anticipated to reach USD 18.4 billion by 2034, growing at a CAGR of 18.1%. It is one of the fastest-growing AI infrastructure markets in the world, driven by digital transformation, increased cloud adoption, growth in enterprise AI implementation, and government initiatives to develop sovereign AI capabilities. In terms of computing infrastructure, the country’s AI-optimized data centers had a capacity of 1,200-1,400 MW in 2025, and that capacity is poised to grow significantly through 2034.

Executive Summary:

AI Infrastructure refers to the complete set of technological components that are needed to create, train, deploy, and operate artificial intelligence solutions. The infrastructure layer consists of sophisticated computing devices like GPUs, TPUs, FPGAs, and customized AI chips designed specifically for deep learning tasks and massive data processing. They are coupled with ultra-fast network technologies, massive data centers, efficient storage mechanisms, and management software for performing distributed training and real-time inference of AI models.

India is strategically important to the worldwide AI industry because of its well-developed information technology services, rapidly growing digital economy, large developer community, and growing focus on domestic AI advancement. Government-backed initiatives such as the IndiaAI Mission have further accelerated the growth of AI infrastructure through investment in national AI computing infrastructure, GPU capacity, the research ecosystem, and domestic AI model development. Market growth is further bolstered by rising adoption of AI technologies across enterprise sectors, including banking, healthcare, manufacturing, agriculture, retail, and the public sector.

From a commercial perspective, AI infrastructure is establishing itself as an essential building block for India's other digital initiatives, such as intelligent automation, multilingual AI development, financial inclusion, smart agriculture, and AI-enabled healthcare delivery. Furthermore, growing awareness of data sovereignty, domestic cloud infrastructure, and regulatory compliance has led to rising investment in local AI computing infrastructure.

| Report Coverage | Details |

|---|---|

| Base Year | 2025 |

| Base Year Value | USD 3.8 Billion |

| Forecast Value | USD 18.4 Billion |

| CAGR | 18.1% |

| Forecast Period | 2026-2034 |

| Historical Data | 2022-2025 |

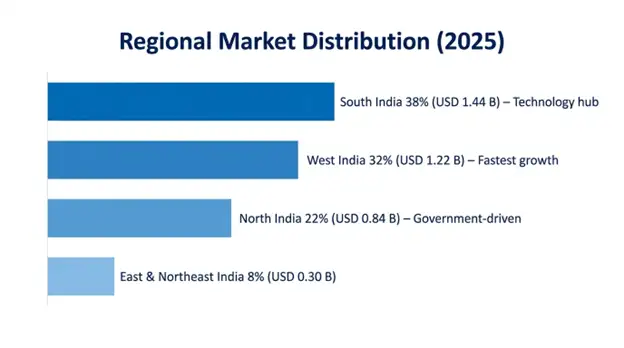

| Largest Market | South India |

| Fastest Growing Market | West India |

| Segments Covered | By Component, Hardware Type, Software, Deployment Model, Technology, End-Use Industry, Region |

| Countries Covered | South India, West India, North India, East & Northeast India |

| Key Market Playes | Yotta Data Services, CtrlS Datacenters, Tata Consultancy Services, Infosys, Amazon Web Services India, Microsoft Azure India, Google Cloud India, NVIDIA India |
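The headline figures in the table can be cross-checked with the standard compound-growth formula. The sketch below is illustrative only: the function names are hypothetical, and it assumes nine compounding periods between the 2025 base year and 2034. Under that assumption the endpoint values imply a rate somewhat above the stated 18.1%, which may simply reflect a different base-year or period convention in the underlying model.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, cagr: float, years: int) -> float:
    """Value reached after compounding start_value at cagr for a number of years."""
    return start_value * (1 + cagr) ** years


# Figures taken from the report coverage table.
BASE_VALUE_USD_BN = 3.8
FORECAST_VALUE_USD_BN = 18.4
REPORTED_CAGR = 0.181

# Assumption: compounding runs from the 2025 base year to 2034 (nine periods).
YEARS = 2034 - 2025

rate = implied_cagr(BASE_VALUE_USD_BN, FORECAST_VALUE_USD_BN, YEARS)
value = project(BASE_VALUE_USD_BN, REPORTED_CAGR, YEARS)

print(f"CAGR implied by the endpoints over {YEARS} years: {rate:.1%}")
print(f"2034 value at the reported CAGR: USD {value:.1f} billion")
```

Changing `YEARS` shows how sensitive the implied rate is to the period convention chosen.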


The IndiaAI Mission serves as the biggest catalyst behind India’s AI infrastructure market. The government has funded the mission with an allocation of INR 10,371.92 crore, with the goal of developing advanced AI computing infrastructure and improving India's position within the global AI ecosystem. The mission is organized into several pillars: AI computing infrastructure, foundation model development, dataset development, talent growth, the startup ecosystem, applications, and an AI governance framework. Together, these initiatives aim to overcome existing infrastructure limitations and encourage broad private-sector participation.

One of the primary focus areas is deploying more than 10,000 GPUs through public-private partnerships (PPPs), making advanced computing infrastructure accessible at affordable prices for startups, R&D institutions, universities, and government agencies. This lowers the entry barriers to AI experimentation and domestic model training.

Several Indian states, including Karnataka, Telangana, Tamil Nadu, Maharashtra, and Gujarat, are developing dedicated policies, incentives, and innovation programs aimed at building their own AI infrastructure, which improves visibility into future demand, supports the scale-up of hyperscale infrastructure, and strengthens the national AI ecosystem.

Government Initiative Impact Metrics:

Companies across sectors in India are increasingly adopting AI capabilities because of stiff competition, changing customer requirements, and the growing realization that AI can transform business operations. These developments are creating significant demand for AI infrastructure to train models, process large volumes of data, and deploy real-time inference within enterprise settings. The rapid adoption of generative AI and large language models is speeding up enterprise AI deployments, shifting the focus from experimentation to commercial use.

India's major IT services companies, including Tata Consultancy Services, Infosys, Wipro, and HCL Technologies, are collectively investing billions of dollars in AI infrastructure, GPU clusters, AI research centers, and proprietary enterprise AI platforms. The BFSI industry is among the leading adopters, using advanced AI platforms for fraud detection, credit scoring, automated customer service, and regulatory compliance management.

Enterprise Adoption Metrics:

India's AI infrastructure market faces several structural constraints because of its heavy dependence on imported AI semiconductors from global manufacturers operating in Taiwan and South Korea. This import dependence exposes the market to supply risks, geopolitical uncertainty, higher hardware import costs, and currency volatility, since such purchases are priced in USD. The shortage of high-end AI chips, especially the advanced GPUs used for large language models and generative AI projects, has led to long procurement times and hardware shortages on a global scale.

Domestic companies and hyperscalers struggle to secure scarce semiconductor components in competition with prominent global technology corporations, which raises infrastructure deployment costs and delays the launch of AI projects at scale. The Government of India is actively investing in semiconductor development through the India Semiconductor Mission and significant industry incentives, but current initiatives concentrate on facilities for chip assembly, packaging, and testing rather than on the fabrication of advanced chips.

Supply Chain Impact Metrics:

India’s linguistic and cultural diversity makes a strong case for developing native AI models that can serve market segments not adequately addressed by global foundation models, for example multilingual solutions that cater to India’s 22 officially recognized languages and numerous dialects, used by over 1.4 billion people. Developing sovereign AI models that cater to Indian languages, cultures, regulations, and use cases is both a strategic imperative and a business opportunity.

The model development initiatives under the IndiaAI Mission, coupled with Indian-language AI innovation at startups such as Sarvam AI, Krutrim, and other ventures, are generating continuous demand for training infrastructure that can handle multilingual data, tokenization needs, and contextual nuances. This requires significant computational power both for initial training and for the ongoing fine-tuning of these models for sectors such as healthcare, education, agriculture, and government services.

Government service delivery systems that use regional languages across more than 180 citizen-focused digital services generate continuous demand for indigenously developed AI solutions, a need that global models developed primarily in English cannot meet.

Sovereign AI Opportunity Metrics:

India’s vast geographic footprint, with its 640,000 villages, more than 8,000 towns, and 53 metros, offers many opportunities to build edge AI infrastructure for applications that require on-site processing, low latency, and low bandwidth consumption. Edge AI deployments span manufacturing processes, quality checks, agriculture, healthcare diagnostics, traffic management, and retail analytics, where real-time requirements make cloud inference impractical or uneconomical.

With the expansion of 5G networks across India, alongside IoT devices and smart city projects, the foundation for edge AI capability is being laid. Telecommunications companies are deploying edge computing nodes near cell towers and at central office premises, which creates opportunities to deploy AI inference infrastructure close to application sites.

Industrial use cases such as manufacturing quality inspection, predictive maintenance, and autonomous systems all need ruggedized edge AI hardware that can operate reliably in harsh environments while still delivering real-time inference. India's manufacturing sector includes more than 63 million micro, small, and medium enterprises, and it represents a large yet underserved market for cost-effective edge AI offerings.

Edge AI Infrastructure Metrics:

The extraordinary power density and thermal output of today’s AI hardware are forcing a shift in how data centers are designed and how cooling technology is adopted across India’s AI infrastructure ecosystem. Traditional air-cooling systems are becoming insufficient for AI server clusters that draw 40–100 kilowatts per rack, so operators are moving toward more advanced liquid cooling approaches, including direct-to-chip cooling, rear-door heat exchangers, and immersion cooling. These options manage thermal loads more effectively while preserving energy efficiency.

Data center operators in India are increasingly building liquid cooling into new AI-optimized facilities, and liquid cooling penetration across AI infrastructure is expected to climb to around 58% by 2028, versus under 12% in conventional data centers. These cooling improvements support higher rack densities, cut cooling energy consumption by about 35–45% compared with traditional air cooling, and help hardware stay reliable in India’s demanding ambient temperature conditions.
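The rack density and cooling figures above lend themselves to a quick back-of-the-envelope calculation. The following is a minimal sketch, not an engineering model: the function names are hypothetical, and the 1,000 MWh air-cooling baseline is an illustrative assumption rather than a figure from this report. Only the 40–100 kW per rack range and the 35–45% reduction range come from the text.

```python
def racks_per_megawatt(rack_kw: float) -> float:
    """Number of racks that 1 MW of IT load supports at a given per-rack draw."""
    if rack_kw <= 0:
        raise ValueError("rack_kw must be positive")
    return 1000.0 / rack_kw


def cooling_energy_after(baseline_mwh: float, reduction: float) -> float:
    """Cooling energy remaining after applying a fractional reduction."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return baseline_mwh * (1.0 - reduction)


# Ranges quoted in the text.
RACK_KW_RANGE = (40.0, 100.0)
REDUCTION_RANGE = (0.35, 0.45)

# Hypothetical annual cooling energy of an air-cooled facility (assumption).
BASELINE_COOLING_MWH = 1000.0

for rack_kw in RACK_KW_RANGE:
    print(f"{rack_kw:.0f} kW/rack -> {racks_per_megawatt(rack_kw):.0f} racks per MW")

for reduction in REDUCTION_RANGE:
    remaining = cooling_energy_after(BASELINE_COOLING_MWH, reduction)
    print(f"{reduction:.0%} reduction -> {remaining:.0f} MWh of cooling energy")
```

Replacing `BASELINE_COOLING_MWH` with a measured value for a specific facility gives the corresponding savings range.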

At the same time, sustainability is becoming a central thread in AI infrastructure decisions. That’s coming from corporate environmental commitments, operational cost optimization, and regulatory expectations all at once. Operators are co-locating AI sites with renewable energy sources, rolling out more capable power management systems, and designing facilities specifically for maximum energy efficiency, to handle the real and substantial environmental impact from AI computing workloads.

The high capital requirements of AI infrastructure are driving the development of specialized AI-as-a-Service platforms, which let enterprises access sophisticated computing power through operational-expenditure plans rather than upfront capital investment. In the domestic market, providers such as Yotta Data Services, E2E Networks, and others are building dedicated GPU cloud offerings with bare-metal instances, pre-configured AI development environments, and managed AI services, all aimed at enterprises that cannot justify building internal infrastructure.

In practice, these platforms address the main market-entry friction points by enabling scalable, on-demand access to costly AI hardware, while higher utilization rates give infrastructure providers a better return on investment. Shared infrastructure also gives smaller firms, startups, and academic institutions access to capabilities that were previously available only to organizations with large budgets and substantial capital resources.

South India holds the largest regional market share, around 38%, valued at USD 1.44 billion in 2025. The region is anchored by Karnataka, where Bangalore continues to serve as India’s leading technology hub. It hosts over 400 multinational technology company offices and 1,200+ startups, and it has the highest concentration of AI talent in the country. The region also benefits from premier educational institutions such as the Indian Institute of Science, the Indian Statistical Institute, and several National Institutes of Technology, which together produce exceptional technical talent, while established technology industry infrastructure and a mature business ecosystem support AI innovation.

Hyderabad, in Telangana, has emerged as a major AI infrastructure hub, with large global capability centers from Microsoft, Google, Amazon, Apple, and many other technology companies. Many of them have set up dedicated AI research facilities and continue to make substantial infrastructure investments. In addition, the state government provides a proactive AI policy framework, including the T-Hub innovation ecosystem and dedicated AI cluster development initiatives, and Hyderabad is widely seen as India’s second most important AI infrastructure market.

Tamil Nadu, especially Chennai, is showing fast AI infrastructure growth, driven largely by automotive sector digitalization, manufacturing automation, and government-supported data center building. That also pulls in hyperscale investments, and the coastal location matters too since it provides submarine cable connectivity and relatively stable power infrastructure.

South India Regional Metrics:

West India holds a 32% regional market share, worth USD 1.22 billion in 2025, supported by Mumbai’s position as India’s financial capital, which drives sophisticated BFSI-sector AI infrastructure needs. Maharashtra’s industrial base and Gujarat’s newer technology corridors also contribute significantly. Mumbai’s concentration of banking headquarters, insurance companies, capital market participants, and fintech firms creates the most demanding enterprise AI infrastructure requirements in India, with a focus on real-time inference, regulatory compliance, data governance, and high availability.

The region benefits from India’s most developed data center ecosystem. Mumbai is the primary submarine cable landing point for international connectivity, and it hosts most of the hyperscale cloud provider infrastructure. Meanwhile, Maharashtra’s industrial clusters in Pune, Nashik, and Aurangabad are driving edge AI adoption for manufacturing use cases, and Gujarat’s renewable energy capacity supports more sustainable AI infrastructure development.

North India shows strong growth potential, with about 22% market share worth USD 836 million in 2025. Growth is largely driven by Delhi NCR’s concentration of government ministries, public sector undertakings, and large enterprise headquarters, all of which are putting AI infrastructure in place to transform digital service delivery. The region also gets a boost from central government AI initiatives such as the IndiaAI Mission rollout and work on digital public infrastructure, with a significant portion of computing infrastructure investment funneled into the national capital region.

Meanwhile, Uttar Pradesh has its own ambitious digital transformation pushes, and the growing tech ecosystems in Noida and Lucknow add to that. With policy support from the government and a large population base, there are meaningful chances for AI infrastructure installation to serve both government use and commercial applications.
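The regional values quoted above follow from applying each share to the USD 3.8 billion base-year market. The sketch below reproduces that arithmetic; the names are hypothetical, and treating the unallocated remainder as East & Northeast India is an assumption, since the text does not state a share for that region.

```python
TOTAL_MARKET_USD_BN = 3.8  # 2025 base-year value from the report

# Regional shares quoted in the text.
REGION_SHARES = {
    "South India": 0.38,
    "West India": 0.32,
    "North India": 0.22,
}


def split_market(total: float, shares: dict[str, float]) -> dict[str, float]:
    """Convert fractional shares into absolute values."""
    return {region: total * share for region, share in shares.items()}


def residual_share(shares: dict[str, float]) -> float:
    """Share left over after the listed regions are accounted for."""
    return 1.0 - sum(shares.values())


values = split_market(TOTAL_MARKET_USD_BN, REGION_SHARES)
rest = residual_share(REGION_SHARES)

for region, value in values.items():
    print(f"{region}: USD {value:.2f} billion")

# Assumption: the remainder corresponds to East & Northeast India.
print(f"Remainder: {rest:.0%}, USD {TOTAL_MARKET_USD_BN * rest:.2f} billion")
```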

Component Insights

Hardware dominates the market with a 64% share, worth USD 2.43 billion in 2025. It includes AI processors and accelerators, servers and storage systems, networking equipment, and cooling infrastructure, which together form the physical foundation for AI computing. The hardware segment maintains a strong 19.2% CAGR through 2034, driven by ongoing technology advances, the need for greater capacity, and the replacement cycles that come with rapidly advancing AI hardware.

Software represents 22% of the market, at USD 836 million in 2025. It spans AI platforms and frameworks, MLOps tools, data management software, and infrastructure orchestration systems that allow hardware resources to be used effectively. Services account for 14% of the market at USD 532 million and show the highest CAGR, 24.1% through 2034. These services cover consulting, integration, managed services, and training programs that support the deployment and tuning of AI infrastructure in practice.
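To see what the quoted segment growth rates imply by 2034, the base-year values can be compounded forward. This is a rough sketch under stated assumptions: it uses nine compounding periods from 2025 to 2034, the names are hypothetical, and software is left out because the text gives no CAGR for it.

```python
# (2025 value in USD billion, CAGR) as quoted in the text.
SEGMENTS = {
    "Hardware": (2.43, 0.192),
    "Services": (0.532, 0.241),
}

# Assumption: nine compounding periods between 2025 and 2034.
YEARS = 2034 - 2025


def project_value(base: float, cagr: float, years: int) -> float:
    """Compound a base value forward at a constant annual growth rate."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return base * (1 + cagr) ** years


projections = {
    name: project_value(base, cagr, YEARS)
    for name, (base, cagr) in SEGMENTS.items()
}

for name, value in projections.items():
    base, cagr = SEGMENTS[name]
    print(f"{name}: USD {base:.2f}B in 2025 -> USD {value:.2f}B in 2034 at {cagr:.1%}")
```

The same helper can be applied to any other segment in the report that states both a base value and a CAGR.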

Hardware Type Insights

AI processors and accelerators account for around 58% of hardware segment value, or USD 1.41 billion in 2025. This is mostly driven by graphics processing units, mainly from NVIDIA and AMD, plus a growing set of specialized AI chips tuned for specific workload types. Servers and storage systems contribute about 26% of hardware value, roughly USD 631 million, and networking equipment accounts for 16%, or USD 389 million, which highlights the importance of high-throughput interconnects for distributed AI training.

Deployment Model Insights

Cloud-based deployment accounts for approximately 56% of the market, at USD 2.13 billion in 2025, reflecting enterprise preference for operational-expenditure models and easier access to newer capabilities without capital investment. On-premises deployment holds a 28% market share, totaling USD 1.06 billion, mainly among organizations that prioritize data sovereignty, face strict regulatory compliance mandates, or need specialized performance features.

Hybrid deployment holds a 16% market share, around USD 608 million, and is the fastest-growing deployment segment at a 22.4% CAGR through 2034. More sophisticated companies are building integrated architectures in which cloud resources support training workloads while on-premises inference infrastructure handles production applications, especially where data locality and consistency are required.

Technology Insights

Generative AI infrastructure is the fastest-growing technology segment, with a 34.2% CAGR through 2034 from a base of USD 912 million in 2025. Growth is driven mostly by large language model rollouts, multimodal AI use cases, and foundation model customization, which create disproportionate compute demand. Machine learning infrastructure holds about 42% of the market at USD 1.60 billion, and it supports established predictive analytics, recommendation systems, and classification workloads.

Deep learning infrastructure represents a 31% market share at USD 1.18 billion, with use across computer vision, natural language processing, and advanced analytics, all of which demand substantial training compute resources, especially when models need repeated iteration.

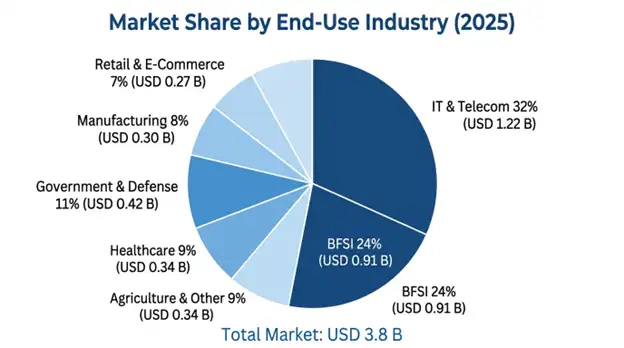

End-Use Industry Insights

IT and telecom is the largest end-use segment, with around 32% market share, valued at USD 1.22 billion in 2025. It covers technology service companies, software development firms, and telecommunications operators that deploy AI infrastructure to support service delivery, network optimization, and product development. BFSI follows at 24% market share, or USD 912 million, reflecting more complex enterprise AI infrastructure needs such as fraud detection, credit analytics, regulatory compliance, and customer experience applications.

The government and defense segment demonstrates strong momentum, with a CAGR of about 18.8% through 2034. It grows from USD 418 million in 2025, driven by digital service delivery transformation, citizen engagement platforms, and national security use cases that need sovereign AI capabilities.

The India AI infrastructure market is highly competitive and fragmented. Global hyperscale cloud providers dominate cloud-based AI infrastructure delivery, while international hardware manufacturers remain important as suppliers of AI-optimized computing components. Domestic data center operators provide specialized AI infrastructure services, and newer Indian technology companies are building indigenous AI platforms and solutions. Amazon Web Services, Microsoft Azure, and Google Cloud together account for around 58% of cloud AI infrastructure revenue, because they offer broad service portfolios and have long-standing enterprise relationships.

On the domestic side, infrastructure players such as Yotta Data Services, CtrlS Datacenters, and Tata Communications focus on sovereign AI infrastructure, data center services, and specialized hosting solutions. They draw on regulatory familiarity, local market knowledge, and strong government relationships. They are also developing AI-optimized facilities and services aimed at enterprises that need data residency compliance, customized service levels, and responsive local support.

Indian technology service firms such as TCS, Infosys, Wipro, and HCL Technologies primarily operate in AI infrastructure consulting, integration services, and managed operations rather than direct infrastructure provision. They apply their deep enterprise relationships and domain expertise when infrastructure is being deployed and when it needs performance tuning and ongoing optimization.

March 2026: Yotta Data Services built the largest privately owned AI computing center in India, with 6,000 NVIDIA H100 graphics processing units and 180 MW of AI capacity intended for businesses and government bodies that require sovereign computing.

February 2026: The Ministry of Electronics and Information Technology introduced IndiaAI compute infrastructure in Pune, giving academics, startups, and research organizations access to 3,000 graphics processing units (GPUs) at a heavily discounted rate of INR 120 per hour.

January 2026: Microsoft Azure extended its Indian AI regions by adding dedicated capacity in Chennai and Hyderabad with 8,500 AI-optimized instances and committed an additional investment of INR 7,200 crore in infrastructure upgrades until 2027.

December 2025: CtrlS Datacenters completed the building of its liquid-cooled AI data center in Mumbai with advanced thermal management technologies capable of accommodating rack densities of up to 100 kilowatts for high-performance AI computing services in financial services firms.

November 2025: Infosys launched its AI infrastructure services platform on domestic cloud infrastructure, enabling enterprise clients to customize their machine learning models with data sovereignty assurance and complete regulatory compliance documentation.
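The discounted rate in the February 2026 item above makes it easy to estimate what a training job might cost on the IndiaAI compute pool. The sketch below is illustrative only: it assumes the INR 120 rate applies per GPU per hour, and the function names and the 64-GPU, 72-hour job are hypothetical examples rather than figures from the announcement.

```python
# Rate and pool size from the February 2026 announcement.
# Assumption: the quoted rate applies per GPU per hour.
RATE_INR_PER_GPU_HOUR = 120
POOL_GPUS = 3000


def job_cost_inr(num_gpus: int, hours: int, rate: int = RATE_INR_PER_GPU_HOUR) -> int:
    """Cost of running num_gpus GPUs for a number of hours at a flat hourly rate."""
    if num_gpus <= 0 or hours < 0:
        raise ValueError("num_gpus must be positive and hours non-negative")
    return num_gpus * hours * rate


def pool_gpu_hours(num_gpus: int, days: int) -> int:
    """Total GPU-hours a pool can supply over a number of days at full utilization."""
    return num_gpus * 24 * days


# Hypothetical job: 64 GPUs for 72 hours.
cost = job_cost_inr(64, 72)
capacity = pool_gpu_hours(POOL_GPUS, 30)

print(f"Job cost: INR {cost:,}")
print(f"Pool capacity over 30 days: {capacity:,} GPU-hours")
```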

List of Key Players in India AI Infrastructure Market

India AI Infrastructure Market Segments

By Component:

By Hardware Type:

By Software:

By Deployment Model:

By Technology:

By End-Use Industry:

By Region:


15 May 2026